Note

This English README is automatically generated by the markdown translation plugin in this project, and may not be 100% correct.

When installing dependencies, please strictly select the versions specified in requirements.txt.

``` sh
pip install -r requirements.txt
```

If you like this project, please give it a Star. If you've come up with more useful academic shortcuts or functional plugins, feel free to open an issue or pull request.

To translate this project into any language with GPT, read and run `multi_language.py` (experimental).

Note:

- Please note that only the function plugins (buttons) marked in red support reading files. Some plugins are in the drop-down menu in the plugin area. We welcome and process any new plugins with the highest priority!

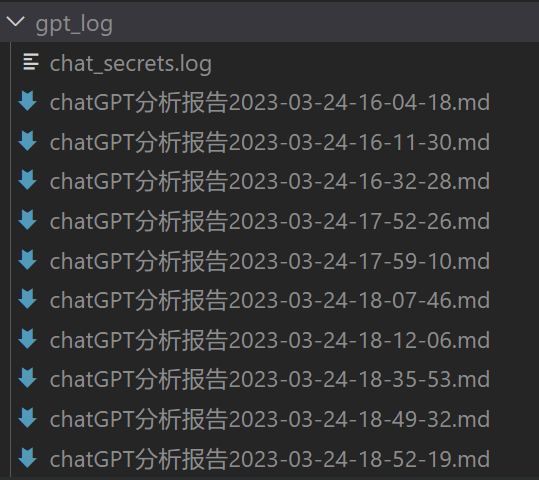

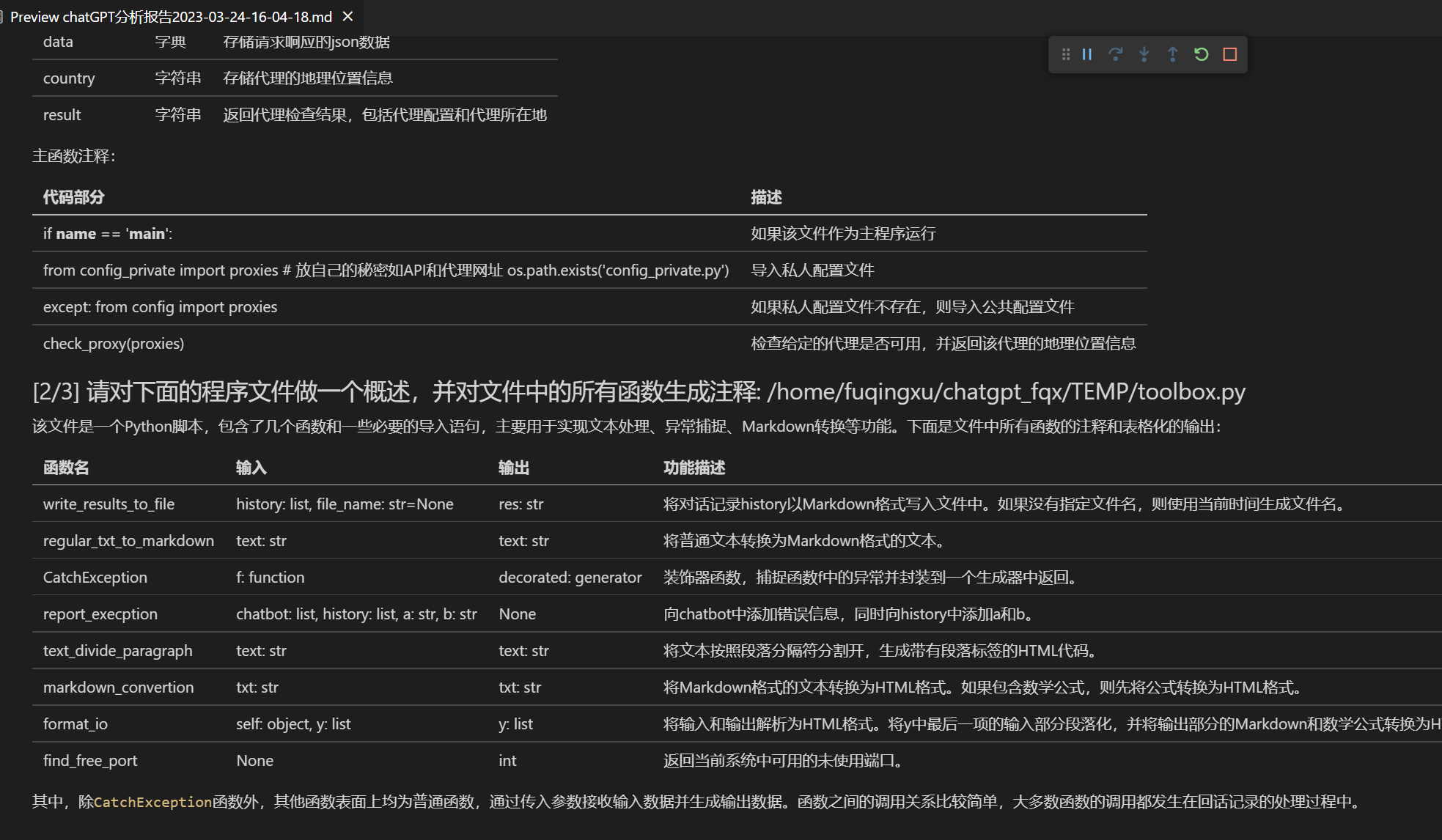

- The function of each file in this project is detailed in the self-analysis report `self_analysis.md`. As the version iterates, you can also click the related function plugins at any time to have GPT regenerate the project's self-analysis report. Common questions are summarized in the wiki; see also the installation method.

- This project is compatible with, and encourages trying, domestic large language models such as chatglm, RWKV, and Pangu. Multiple API keys are supported and can be filled in the configuration file like `API_KEY="openai-key1,openai-key2,api2d-key3"`. To change `API_KEY` temporarily, enter the temporary `API_KEY` in the input area and press Enter to submit; the change then takes effect.
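As a rough illustration of the comma-separated `API_KEY` format above, the following minimal sketch splits such a string into individual keys and picks one per request. The helper names `split_api_keys` and `pick_api_key` are hypothetical, and random selection is only an assumption about how several keys might be balanced; it is not taken from the project's code.

```python
import random
from typing import List, Optional


def split_api_keys(api_key_setting: str) -> List[str]:
    """Split a comma-separated API_KEY setting into individual keys."""
    # Strip surrounding whitespace and drop empty entries (e.g. a trailing comma).
    return [key.strip() for key in api_key_setting.split(",") if key.strip()]


def pick_api_key(api_key_setting: str, rng: Optional[random.Random] = None) -> str:
    """Pick one key at random (an assumed, simplistic balancing strategy)."""
    keys = split_api_keys(api_key_setting)
    if not keys:
        raise ValueError("API_KEY is empty")
    # Use the supplied generator if given, otherwise the random module itself.
    return (rng or random).choice(keys)


if __name__ == "__main__":
    API_KEY = "openai-key1,openai-key2,api2d-key3"  # example value from the text above
    print(split_api_keys(API_KEY))
    print(pick_api_key(API_KEY))
```

Passing a seeded `random.Random` instance makes the choice reproducible, which can help when debugging which key was used.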

| Function | Description |

|---|---|

| One-click polishing | Supports one-click polishing and one-click searching for grammar errors in papers. |

| One-click Chinese-English translation | One-click Chinese-English translation. |

| One-click code interpretation | Displays, explains, generates, and adds comments to code. |

| Custom shortcut keys | Supports custom shortcut keys. |

| Modular design | Supports custom powerful function plug-ins, plug-ins support hot update. |

| Self-program profiling | [Function plug-in] One-click understanding of the source code of this project |

| Program profiling | [Function plug-in] One-click profiling of other project trees in Python/C/C++/Java/Lua/... |

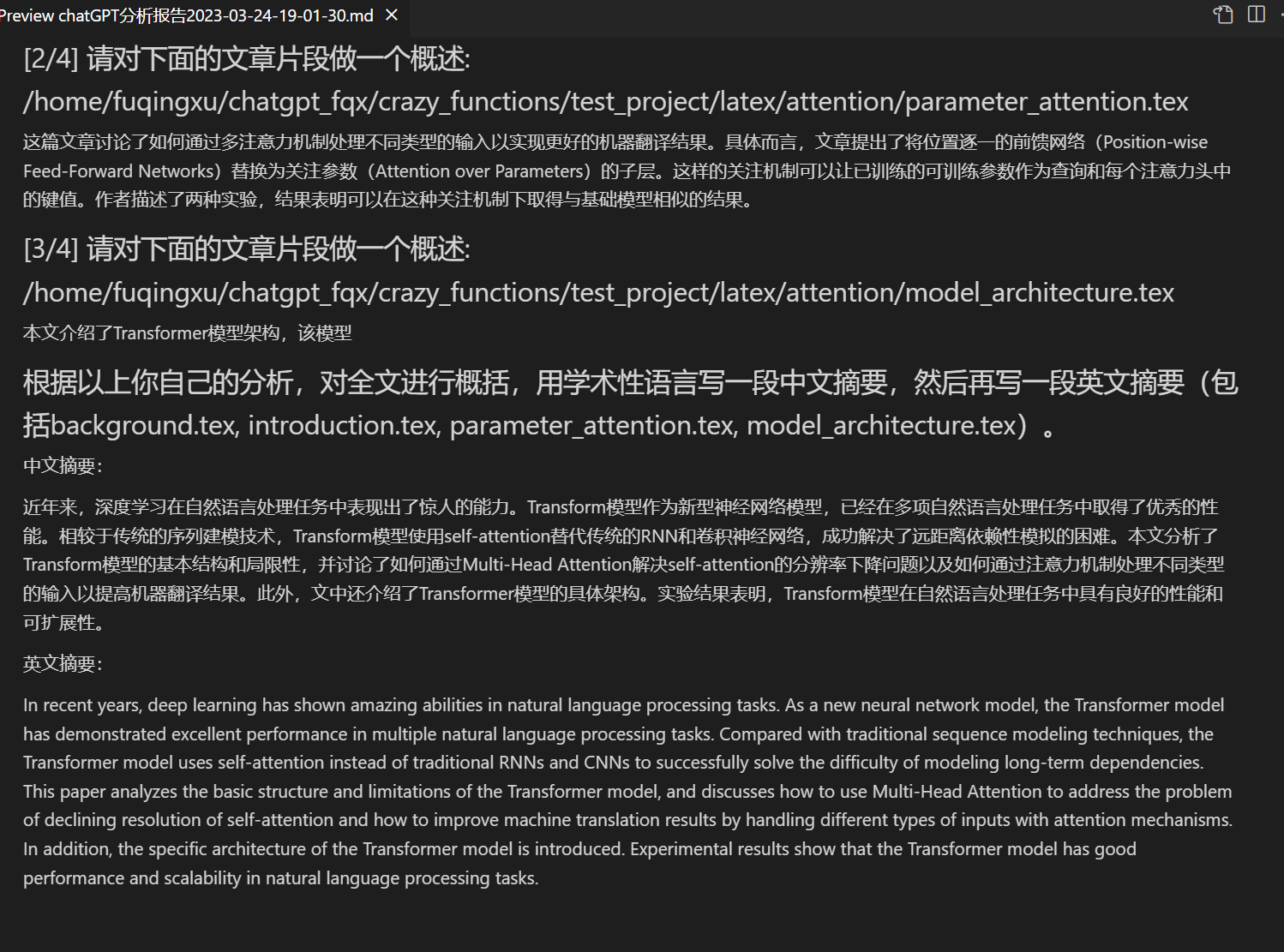

| Reading papers, translating papers | [Function Plug-in] One-click interpretation of latex/pdf full-text papers and generation of abstracts. |

| Latex full-text translation, polishing | [Function plug-in] One-click translation or polishing of latex papers. |

| Batch annotation generation | [Function plug-in] One-click batch generation of function annotations. |

| Markdown Chinese-English translation | [Function plug-in] Have you seen the README in the five languages above? |

| Chat analysis report generation | [Function plug-in] Automatically generate summary reports after running. |

| PDF full-text translation function | [Function plug-in] PDF paper extract title & summary + translate full text (multi-threaded) |

| Arxiv Assistant | [Function plug-in] Enter the arxiv article url and you can translate abstracts and download PDFs with one click. |

| Google Scholar Integration Assistant | [Function plug-in] Given any Google Scholar search page URL, let GPT help you write relatedworks |

| Internet information aggregation+GPT | [Function plug-in] One-click let GPT get information from the Internet first, then answer questions, and let the information never be outdated. |

| Formula/image/table display | Can display formulas in both tex form and render form, support formulas and code highlighting. |

| Multi-threaded function plug-in support | Supports multi-threaded calling of chatgpt, and can process massive text or programs with one click. |

| Start Dark Gradio theme | Add /?__theme=dark after the browser URL to switch to the dark theme. |

| Multiple LLM models support, API2D interface support | The feeling of being served by GPT3.5, GPT4, Tsinghua ChatGLM, and Fudan MOSS at the same time must be great, right? |

| More LLM model access, support huggingface deployment | Add Newbing interface (New Bing), introduce Tsinghua Jittorllms to support LLaMA, RWKV and Panguα |

| More new feature displays (image generation, etc.)…… | See the end of this document for more... |
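The table mentions multi-threaded calling for processing large texts. The sketch below shows one generic way this could work: split the text into chunks and send them to a model concurrently. It is only an assumption about the general technique, not the project's implementation; `ask_llm` is a hypothetical callable standing in for a real model request, and fixed-size character chunks are a simplification.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def split_into_chunks(text: str, max_chars: int) -> List[str]:
    """Cut text into consecutive pieces of at most max_chars characters."""
    if max_chars <= 0:
        raise ValueError("max_chars must be positive")
    return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]


def ask_in_parallel(
    chunks: List[str],
    ask_llm: Callable[[str], str],  # hypothetical: one request per chunk
    max_workers: int = 4,
) -> List[str]:
    """Send every chunk to ask_llm on a thread pool, keeping the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map returns results in the order of the inputs.
        return list(pool.map(ask_llm, chunks))


if __name__ == "__main__":
    pieces = split_into_chunks("a long document " * 10, max_chars=40)
    # str.upper stands in for a real model call in this demo.
    print(ask_in_parallel(pieces, str.upper))
```

Threads suit this kind of work because each request spends most of its time waiting on the network rather than computing.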

- New interface (modify the LAYOUT option in `config.py` to switch between "left and right layout" and "up and down layout")

- Polishing/correction

- If the output contains formulas, they are displayed in both `tex` and rendered form, making them easy to copy and read.

- Tired of reading the project code? ChatGPT can explain it all.

- Multiple large language models are mixed, such as ChatGLM + OpenAI-GPT3.5 + API2D-GPT4.

- Download the project

``` sh
git clone https://github.com/binary-husky/gpt_academic.git
cd gpt_academic
```

- Configure the API_KEY

Configure `API_KEY` in `config.py`, along with any settings needed for special network environments.

(P.S. At startup, the program first checks whether a private configuration file named `config_private.py` exists and uses its values to override the same options in `config.py`. If you understand this configuration-reading logic, we strongly recommend creating a new file named `config_private.py` next to `config.py` and copying the options from `config.py` into it. `config_private.py` is not tracked by git, which keeps your private information more secure. P.S. The project also supports configuring most options through environment variables; refer to the format of the docker-compose file when writing them. Reading priority: environment variables > `config_private.py` > `config.py`.)
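The reading priority described above (environment variables > `config_private.py` > `config.py`) can be sketched as follows. This is a minimal illustration under stated assumptions, not the project's actual loader: `read_option` is a hypothetical helper, and the two configuration files are modeled as plain dictionaries.

```python
import os
from typing import Any, Mapping, Optional


def read_option(
    name: str,
    default_config: Mapping[str, Any],                   # stands in for config.py
    private_config: Optional[Mapping[str, Any]] = None,  # stands in for config_private.py
    environ: Optional[Mapping[str, str]] = None,         # defaults to os.environ
) -> Any:
    """Resolve one option: environment variables > config_private.py > config.py."""
    environ = os.environ if environ is None else environ
    if name in environ:
        # Environment values are always strings; type conversion is left out of this sketch.
        return environ[name]
    if private_config is not None and name in private_config:
        return private_config[name]
    return default_config[name]


if __name__ == "__main__":
    config = {"API_KEY": "key-from-config", "WEB_PORT": 50923}
    config_private = {"API_KEY": "key-from-config-private"}
    print(read_option("API_KEY", config, config_private, environ={}))
    print(read_option("WEB_PORT", config, config_private, environ={"WEB_PORT": "8080"}))
```

Note that a value read from the environment arrives as a string (here `"8080"`), so a real loader would need to convert it to the type used in `config.py`.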

- Install the dependencies

``` sh
# (Option I: if you are familiar with Python) Python 3.9 or above, the newer the better.
# Note: use the official pip source or the Aliyun pip source. To switch temporarily:
# python -m pip install -r requirements.txt -i https://mirrors.aliyun.com/pypi/simple/
python -m pip install -r requirements.txt

# (Option II: if you are not familiar with Python) Use Anaconda; the steps are similar (https://www.bilibili.com/video/BV1rc411W7Dr):
conda create -n gptac_venv python=3.11    # create the Anaconda environment
conda activate gptac_venv                 # activate the Anaconda environment
python -m pip install -r requirements.txt # same as the pip installation step
```

[Optional step] If you need to support Tsinghua ChatGLM/Fudan MOSS as a backend, you need to install more dependencies (prerequisites: familiarity with Python, experience with PyTorch, and a sufficiently powerful computer):

``` sh
# [Optional Step I] Support Tsinghua ChatGLM.
# Remarks: if you encounter the "Call ChatGLM fail cannot load ChatGLM parameters" error, check the following:
# 1: The default installation above is the torch + cpu version; to use CUDA, uninstall torch and reinstall torch + cuda.
# 2: If the model cannot be loaded because the local machine is not powerful enough, you can change the model precision in request_llms/bridge_chatglm.py:
#    change AutoTokenizer.from_pretrained("THUDM/chatglm-6b", trust_remote_code=True)
#    to     AutoTokenizer.from_pretrained("THUDM/chatglm-6b-int4", trust_remote_code=True)
python -m pip install -r request_llms/requirements_chatglm.txt

# [Optional Step II] Support Fudan MOSS
python -m pip install -r request_llms/requirements_moss.txt
git clone https://github.com/OpenLMLab/MOSS.git request_llms/moss  # Run this command from the root directory of the project
```

``` python
# [Optional Step III] Make sure AVAIL_LLM_MODELS in the config.py configuration file includes the expected models. Currently supported models are as follows (the jittorllms series only supports the Docker solution for the time being):
AVAIL_LLM_MODELS = ["gpt-3.5-turbo", "api2d-gpt-3.5-turbo", "gpt-4", "api2d-gpt-4", "chatglm", "newbing", "moss"] # + ["jittorllms_rwkv", "jittorllms_pangualpha", "jittorllms_llama"]
```

- Run it

``` sh
python main.py
```

- Test Function Plugin

Test the function plugin template (it asks GPT what happened today in history); you can use it as a template to implement more complex functions. Click "[Function Plugin Template Demo] Today in History".

## Installation - Method 2: Using Docker

1. ChatGPT Only (Recommended for Most People)

``` sh
git clone https://github.com/binary-husky/gpt_academic.git  # Download the project
cd gpt_academic                                              # Enter the directory
nano config.py                                               # Edit config.py with any text editor; configure "Proxy", "API_KEY", "WEB_PORT" (e.g. 50923), etc.
docker build -t gpt-academic .                               # Install

# (Last step - option 1) On Linux, using `--net=host` is more convenient and faster.
docker run --rm -it --net=host gpt-academic
# (Last step - option 2) On macOS/Windows, only the -p option can be used to expose the container's port (e.g. 50923) to a port on the host.
docker run --rm -it -e WEB_PORT=50923 -p 50923:50923 gpt-academic
```

2. ChatGPT + ChatGLM + MOSS (Requires Docker Knowledge)

``` sh
# Modify docker-compose.yml: delete Plan 1 and Plan 3 and keep Plan 2. Then adjust the configuration of Plan 2, following the comments in the file.
docker-compose up
```

3. ChatGPT + LLAMA + Pangu + RWKV (Requires Docker Knowledge)

``` sh
# Modify docker-compose.yml: delete Plan 1 and Plan 2 and keep Plan 3. Then adjust the configuration of Plan 3, following the comments in the file.
docker-compose up
```

- How to Use a Reverse Proxy URL/Microsoft Azure API: configure API_URL_REDIRECT according to the instructions in `config.py`.

- Deploy to a Remote Server (requires knowledge and experience with cloud servers): please visit Deployment Wiki-1.

- Using WSL2 (Windows Subsystem for Linux): please visit Deployment Wiki-2.

- How to Run Under a Subdomain (e.g. `http://localhost/subpath`): please visit FastAPI Running Instructions.

- Using docker-compose to Run: read the docker-compose.yml and follow the prompts.

- Custom New Shortcut Buttons (Academic Hotkey)

Open `core_functional.py` with any text editor, add an entry as follows, and restart the program. (If the button has already been added and is visible, the prefix and suffix can be hot-modified without restarting the program.) For example:

``` python
"Super English-to-Chinese": {
    # Prefix: added before your input. Use it to describe your request, such as translation, code explanation, or polishing.
    "Prefix": "Please translate the following content into Chinese and then use a markdown table to explain the proprietary terms that appear in the text:\n\n",
    # Suffix: added after your input. For example, together with the prefix, it can surround your input with quotes.
    "Suffix": "",
},
```
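To make the role of the prefix and suffix concrete, here is a minimal sketch of how such an entry could be applied to user input. The `apply_shortcut` helper and the `SHORTCUTS` dictionary are hypothetical names used only for illustration; the project's own handling in `core_functional.py` may differ.

```python
from typing import Dict

# Hypothetical container mirroring the entry format shown above.
SHORTCUTS: Dict[str, Dict[str, str]] = {
    "Super English-to-Chinese": {
        "Prefix": "Please translate the following content into Chinese and then use a "
                  "markdown table to explain the proprietary terms that appear in the text:\n\n",
        "Suffix": "",
    },
}


def apply_shortcut(name: str, user_input: str,
                   shortcuts: Dict[str, Dict[str, str]] = SHORTCUTS) -> str:
    """Wrap the user's input with the prefix and suffix of the chosen shortcut."""
    entry = shortcuts[name]
    return entry["Prefix"] + user_input + entry["Suffix"]


if __name__ == "__main__":
    print(apply_shortcut("Super English-to-Chinese", "Attention is all you need."))
```

Because the prompt sent to the model is simply prefix + input + suffix, changing either string changes the button's behavior without touching any other code.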

- Custom Function Plugins

Write powerful function plugins to perform any task you can think of, even those you cannot think of. The difficulty of plugin writing and debugging in this project is very low. As long as you have a certain knowledge of Python, you can implement your own plug-in functions based on the template we provide. For details, please refer to the Function Plugin Guide.
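As a loose illustration of what a small plugin in the spirit of the "Today in History" template demo might do, the sketch below builds a prompt for a given date and records the exchange. It is not the project's plugin interface, which is defined by the provided template and the Function Plugin Guide; `ask_llm` is a hypothetical callable standing in for the model request, and the prompt wording is invented for this example.

```python
from datetime import date
from typing import Callable, List, Optional


def today_in_history_prompt(day: Optional[date] = None) -> str:
    """Build the question for a given date (defaults to today)."""
    day = day or date.today()
    return (
        f"What notable events happened on {day.strftime('%B')} {day.day} in history? "
        "List two of them and briefly describe each."
    )


def today_in_history_plugin(
    ask_llm: Callable[[str], str],  # hypothetical: sends a prompt, returns the reply
    history: List[str],
    day: Optional[date] = None,
) -> List[str]:
    """Ask the model about the date and append the question and answer to history."""
    prompt = today_in_history_prompt(day)
    answer = ask_llm(prompt)
    history.extend([prompt, answer])
    return history


if __name__ == "__main__":
    # A stub replaces the real model call so the sketch runs offline.
    print(today_in_history_plugin(lambda p: f"(model reply to: {p})", []))
```

Keeping the model call behind a plain callable makes the plugin logic easy to test with a stub before wiring it to a real backend.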

- Conversation saving function. Call `Save current conversation` in the function plugin area to save the current conversation as a readable and recoverable HTML file. In addition, call `Load conversation history archive` in the function plugin area (dropdown menu) to restore previous sessions. Tip: Clicking `Load conversation history archive` without specifying a file displays the cached HTML archives, and clicking `Delete all local conversation history` deletes all cached HTML archives.
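The idea of saving a conversation as a readable HTML file can be sketched as follows. This is only an illustration of the saving side under assumed names (`conversation_to_html`, `save_conversation`) and an assumed data shape (a list of question/answer pairs); the project's actual archive format is not described here and may differ.

```python
import html
from pathlib import Path
from typing import List, Tuple, Union


def conversation_to_html(turns: List[Tuple[str, str]]) -> str:
    """Render (question, answer) pairs as a small standalone HTML page."""
    rows = []
    for question, answer in turns:
        # Escape the text so that markup typed by the user is shown literally.
        rows.append(f'<div class="user">{html.escape(question)}</div>')
        rows.append(f'<div class="assistant">{html.escape(answer)}</div>')
    body = "\n".join(rows)
    return (
        '<!DOCTYPE html>\n<html><head><meta charset="utf-8"></head><body>\n'
        + body
        + "\n</body></html>"
    )


def save_conversation(turns: List[Tuple[str, str]], path: Union[str, Path]) -> Path:
    """Write the rendered page to disk and return the file path."""
    path = Path(path)
    path.write_text(conversation_to_html(turns), encoding="utf-8")
    return path


if __name__ == "__main__":
    demo = [("What is <b>RWKV</b>?", "An RNN-style language model.")]
    print(conversation_to_html(demo))
```

Keeping each turn in its own element with a distinct class is one way to make such a file parseable again later, which is what restoring a session would require.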

- Report generation. Most plugins will generate work reports after execution.

- Modular function design with simple interfaces that support powerful functions.

- This is an open-source project that can "self-translate".

- Translating other open-source projects is a piece of cake.

- A small feature decorated with live2d (disabled by default; enable it by modifying `config.py`).

- Added MOSS large language model support.

- OpenAI image generation.

- OpenAI audio parsing and summarization.

- Full-text proofreading and error correction of LaTeX.

- version 3.5(Todo): Use natural language to call all function plugins of this project (high priority).

- version 3.4(Todo): Improve multi-threading support for chatglm local large models.

- version 3.3: +Internet information integration function.

- version 3.2: Function plugin supports more parameter interfaces (save conversation function, interpretation of any language code + simultaneous inquiry of any LLM combination).

- version 3.1: Support simultaneous inquiry of multiple GPT models! Support api2d, and support load balancing of multiple apikeys.

- version 3.0: Support chatglm and other small LLM models.

- version 2.6: Refactored plugin structure, improved interactivity, and added more plugins.

- version 2.5: Self-updating, solving the problem of text overflow and token overflow when summarizing large engineering source codes.

- version 2.4: (1) Added PDF full-text translation function; (2) Added the function of switching the position of the input area; (3) Added vertical layout option; (4) Optimized multi-threading function plugins.

- version 2.3: Enhanced multi-threading interactivity.

- version 2.2: Function plugin supports hot reloading.

- version 2.1: Collapsible layout.

- version 2.0: Introduction of modular function plugins.

- version 1.0: Basic functions.

gpt_academic Developer QQ Group-2: 610599535

- Known Issues

- Some browser translation plugins interfere with the front-end operation of this software.

- Gradio versions that are too new or too old can both lead to various exceptions.

Many other excellent designs have been referenced in the code, mainly including:

```
# Project 1: THU ChatGLM-6B:
https://github.com/THUDM/ChatGLM-6B

# Project 2: THU JittorLLMs:
https://github.com/Jittor/JittorLLMs

# Project 3: Edge-GPT:
https://github.com/acheong08/EdgeGPT

# Project 4: ChuanhuChatGPT:
https://github.com/GaiZhenbiao/ChuanhuChatGPT

# Project 5: ChatPaper:
https://github.com/kaixindelele/ChatPaper

# More:
https://github.com/gradio-app/gradio
https://github.com/fghrsh/live2d_demo
```